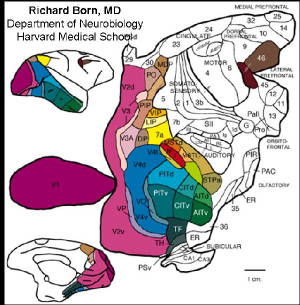

Map of Visual Cortical Regions (from the Richard Born lab, Dept. of Neurobiology, HMS)

Clearly the vast majority of vertebrate information processing is done at the "subconscious" level. Not only are we unaware of the detailed neuronal firing patterns in (e.g.) visual cortical areas V1, V2, V4, MT, etc., we have no apparent way of ever consciously "accessing" this information.

Our conscious experience clearly has access to the highly processed results of "lower level" (??) information processing, and it can operate in the linguistic-thought realms, but IS information processing per se done at the "conscious level"? If so, how much information is processed at this level?

Taking a pragmatic view that our thoughts have some mechanistic function (as opposed to pure epiphenomenalism), we can ask what substantial roles our thought processes play and how this relates to processes that are obligately "conscious", in the sense that if we don't pay conscious heed to them, we cannot perform them. For example, I could not write this text without dedicating some "conscious" or attentional resources to it.

[This question is relevant even from the epiphenomenalist's view, assuming that the neural processes underlying the epiphenomenal ("epiP") "sensation" of conscious information processing are distinct from those that never reach the level of conscious experience or awareness.]

But what are obligate self-conscious processes?

There are certain mental operations that require "deliberate focused effort" in order to be accomplished. This immediately ties into attentional mechanisms and may relate to the so-called "neural correlates of consciousness" championed by Koch and co-workers. This attentional system might be viewed as a quite limited RAM into which we can read and write: a sketchpad, if you will, whose capacity is vastly inferior to our ROM (long-term memories). The size of our sketchpad is reflected by the number of concepts or digits that we can hold at one time (7 or 8 or so), which amounts to just a few bytes of information. That seems intellectually puny, especially given that our operations in this sketchpad are largely serial in nature. So how did we become Masters of the Universe with a sketchpad that the simplest pocket calculator would laugh at? This is one of the deeper mysteries of neuroscience, and it relates directly to the issue of conscious vs. subconscious processing of information.
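To put a rough number on "a few bytes": assuming, purely for illustration, that the 7 or so items held in the sketchpad are decimal digits, each carrying $\log_2 10$ bits when drawn uniformly at random, the capacity works out as follows. Even under the generous, hypothetical assumption that each item is a word drawn uniformly from a 50,000-word vocabulary, the total stays well under twenty bytes.

$$
C_{\text{digits}} \approx 7 \times \log_2 10 \approx 7 \times 3.32 \approx 23\ \text{bits} \approx 3\ \text{bytes}
$$

$$
C_{\text{words}} \approx 7 \times \log_2\!\left(5 \times 10^{4}\right) \approx 7 \times 15.6 \approx 109\ \text{bits} \approx 14\ \text{bytes}
$$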

We have something that no extant or practically envisioned computer can or will have: massively diverse, highly specific and vast neural interconnections, and algorithms that abstract higher-order patterns from our worlds. We also have powerful learning algorithms supported by the horrendous complexity of the cortical column, which is itself just a simple processing unit contributing to the far greater complexity of the cortex-thalamus-cerebellum system, as guided by reward-based limbic mechanisms. It is the details of these architectures that allow us puny sketchpad creatures to be Masters of the Universe, for now. The issue is not whether such skills can be implemented in silico; the big issue is this: can in silico creatures (DEs and the like) be made vastly more powerful because their sketchpads can operate in the terabyte range vs. the human range of a handful of bytes? The answer to this question lies in understanding exactly how conscious information processing interfaces with the subconscious. Is there something in the nature of this information processing structure that formally precludes massive scale-up?

Peter Somogyi recently presented a detailed accounting of hippocampal microcircuitry (CSHL 2006 meeting, "Neuronal Circuits: From Structure to Function"). He focused on just a few of the 16 defined types of hippocampal interneurons. The gist of this talk eluded me, but it did seem that there were complex phase relationships between different neuronal subtypes and the ongoing cortical electrical rhythms, which play a dominant role in our conscious levels of information processing. One view of anesthesia is that it represents a fragmentation of the normally cohesive intra- and inter-hemispheric neural activity patterns (George Mashour, senior anesthesia resident at MGH in spring 2006). Thus the issue becomes how the details of the hippocampal microcircuit (or cortical column), working in massive tandem via global mechanisms that tie all brain regions together (unless fragmented by anesthesia or a bump on the head), combine to produce this "puny sketchpad". [Note: physicists' descriptions of scale-free network dynamics may be relevant to this system; check with Armen Stepanyants for contact info.]

The gist here is that the puny sketchpad is perhaps just the tip of a much more massive subconscious sketchpad that performs triage on candidate words, ideas and judgments and filters the best candidates up to the puny sketchpad for final deliberations/decisions. Our ability to recall only short sequences of digits is thus highly misleading: this narrow application/result masks the true purpose and complexity of the system. None of this materially addresses the question of whether the conscious sketchpad actually does ANY information processing (from either materialist or epiP views), but the larger point has already been made: the complexity of the neural structures at the local and global levels is what gives rise to our cognitive capabilities. The issue of conscious IP will have to rest on the back burner of my subconscious for now.

TBA:

Shannon information theory vs. "thought information": a disconnect.

The log(1/P) (i.e. -log P) view of information seems inapplicable.

Consciousness as an attention-focusing mechanism [relates well to consciousness as a vertebrate decision-making device].
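For reference, the standard Shannon quantities behind the note above are the self-information of an outcome $x$ with probability $P(x)$, measured in bits, and the entropy of a source $X$, which is its expected self-information. A fair coin flip, for example, carries $\log_2 2 = 1$ bit.

$$
I(x) = \log_2 \frac{1}{P(x)} = -\log_2 P(x)
$$

$$
H(X) = \sum_{x} P(x)\, I(x) = -\sum_{x} P(x) \log_2 P(x)
$$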


The answer to the Semantics problem [How do we get Semantics from Syntax?] seems simple enough to me: Semantics Comes from Experience. This sounds obvious once stated, although I could not find any prior claim that this is so in a casual Google search**, and I have certainly not scoured the vast neurophilosophical literature for this phrase. The statement might be trivialized to mean "we learn stuff and then it has meaning", but that misses the point. The point is that syntax comes to have meaning as we build it into information processing structures that are interfaced to motor and reward systems. In some cases (instinct) the relevant experience is that acquired over evolutionary time, and so we know that a moist tit is a good thing even absent our own personal experience.

In the same way, a larval zebrafish knows that a paramecium is a good thing to track and eat based on the barest amount of visual information, despite having had no prior experience with paramecia (McElligott and O'Malley, 2005). This is something quite beyond Eric Baum's view of inductive bias channeling our learning abilities to suit specific needs, true though that may be. Pure instinct is devoid of any individual learning: it is coded into the genome and expressed via the process of neural development.

Dennett and Dretske have had lively exchanges on the nature and role of "meaning", but this seems to be just another way of discussing the semantics-from-syntax problem. Here is how you build sentient machines: build digital architectures equivalent to our cortical columns and cortex, and give them experiences, as you would any human infant. An alternative approach is the "chip-replacement man" scenario, in which you substitute perfect neuronal replicas into my brain, one neuron at a time, until I am wholly artificial. In this case you have not given me any new experiences, just replaced my biological memory core with a digital one. Ultimately it seems as though things "mean" something to us because we are conscious of their "meaning" (like the smoke alarm beeping, telling me I should stop writing now), but Bayes' rule applies and it is all just action potentials and probabilities: it is all syntax... syntax that means something. This is just a restatement of the core problem of "what is consciousness"; we cannot solve one problem without solving the other.
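To make the "it is all probabilities" point concrete, here is a minimal sketch of Bayes' rule applied to the beeping smoke alarm. The probabilities are hypothetical numbers chosen only for illustration, not measurements; the point is that the "meaning" of the beep (how worried to be) falls out of a prior and two likelihoods.

```python
def posterior(prior: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Bayes' rule for a binary hypothesis H after observing evidence E: P(H | E)."""
    joint_h = p_e_given_h * prior                    # P(E and H)
    p_e = joint_h + p_e_given_not_h * (1.0 - prior)  # P(E), by total probability
    return joint_h / p_e


# Hypothetical numbers, for illustration only.
# H: "there is a fire in the building"; E: "the smoke alarm is beeping".
P_FIRE = 0.001            # prior: fires are rare on any given evening
P_BEEP_IF_FIRE = 0.99     # the alarm almost always sounds during a fire
P_BEEP_IF_NO_FIRE = 0.05  # false alarms: burnt toast, steam, routine tests

p_fire = posterior(P_FIRE, P_BEEP_IF_FIRE, P_BEEP_IF_NO_FIRE)
print(f"P(fire | beeping) = {p_fire:.3f}")
```

With these assumed numbers the script prints `P(fire | beeping) = 0.019`: the beep raises the probability of fire roughly twentyfold over the prior, yet a false alarm remains far more likely.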

Stuff in the world has meaning because we are conscious of that stuff and we have experiences that give it meaning... absent consciousness there is no meaning, or at least no meaning of which we can be aware, because we are not aware. This does not rule out the idea of even quite simple animals having both consciousness and meaning.

** The only instance of "semantics comes from experience" that I found on Google was in a Java distance-learning course, and while it has nothing specifically to do with the mind-body problem, it is indeed quite relevant to the discussion at hand. In that posting, Kenneth A. Kousen (aka gunslinger) says that you can best learn Java syntax by reading many examples. In our case we argue that the syntax (labeled lines of sensory information; rewarding vs. aversive stimuli; temporal coincidences encoded by STDP, the spike-timing-dependent refinement of what used to be discussed simply as LTP) leads to complex relationships. I know that the whee-uuuu, whee-uuu that I just heard was not a fire alarm in my building but rather a siren somewhere down Huntington Avenue in Boston. I know this not just because I've seen ambulances going whee-uuuu down the street, but because I know what wheels and roads and hospitals and bodies and doctors and more all are.

The really interesting thing here is not YOUR brain; it is the brain of your infant son or daughter. This is where syntax becomes semantics: where the first semantics of walls and floors and sharp and soft and wet and dry and pain and hunger and warmth begin to emerge out of the cacophony of sensory stimuli, internal signals and temporal relationships. What I want to know is how the infant's cortical columns work and how these columns talk to one another, as informed by thalamic gating and intermittent reward systems. This has nothing to do with language, because language is a good year away at this point, maybe two or three depending on the infant. I can only imagine the wonderful pleasure that my little Liam experiences at those calm, gentle moments when he leans against me and gurgles with joyous contentment.
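The footnote leans on STDP to turn temporal coincidences into structure. As a rough illustration only, the sketch below implements a common textbook pair-based STDP rule with exponential timing windows; the amplitudes and time constants are assumed values, not taken from any specific study, and real synapses are considerably messier. A presynaptic spike that reliably precedes a postsynaptic one strengthens the synapse, and the reverse order weakens it.

```python
import math

# Assumed, illustrative parameters (not fitted to any particular data set).
A_PLUS = 0.010    # maximum potentiation per spike pair
A_MINUS = 0.012   # maximum depression per spike pair
TAU_PLUS = 20.0   # potentiation time constant (ms)
TAU_MINUS = 20.0  # depression time constant (ms)


def stdp_dw(t_pre: float, t_post: float) -> float:
    """Weight change for one pre/post spike pair (pair-based exponential STDP)."""
    dt = t_post - t_pre  # ms; positive when the presynaptic spike comes first
    if dt > 0:
        return A_PLUS * math.exp(-dt / TAU_PLUS)    # pre before post: potentiate
    if dt < 0:
        return -A_MINUS * math.exp(dt / TAU_MINUS)  # post before pre: depress
    return 0.0


def apply_stdp(w: float, pre_spikes: list[float], post_spikes: list[float],
               w_min: float = 0.0, w_max: float = 1.0) -> float:
    """Sum the updates over all pre/post spike pairs, then clip w to [w_min, w_max]."""
    total = sum(stdp_dw(t_pre, t_post) for t_pre in pre_spikes for t_post in post_spikes)
    return min(w_max, max(w_min, w + total))


# Pre fires 5 ms before post on every trial: a consistent temporal coincidence.
w_causal = apply_stdp(0.5, pre_spikes=[0, 100, 200], post_spikes=[5, 105, 205])
# Same spike times with the order reversed: post fires 5 ms before pre.
w_anticausal = apply_stdp(0.5, pre_spikes=[5, 105, 205], post_spikes=[0, 100, 200])

print(f"causal pairing:      w = {w_causal:.3f}")      # ends above the starting 0.5
print(f"anti-causal pairing: w = {w_anticausal:.3f}")  # ends below the starting 0.5
```

All-to-all pairing is used here for simplicity; other pairing schemes (e.g. nearest-neighbour) are also common in models.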

Semantics Comes from Experience: Java style

...

The issue of Consc. vs. Subconsc. IP (information processing) becomes especially curious when one tries to probe deeper into one's own IP activities, i.e. to get at the substance and details of the material that produces thought.

My sense is that there is an information "firewall": you can home in on certain sensory experiences, but you cannot home in on whether this information is being processed in the olfactory bulb or the inferior colliculus. You can say nothing about the locus of this processing even if you sink into the deepest depths of meditation, where you are able to control heart rate and metabolic functions: you can control these things, but you cannot order action potentials to traverse the trigeminal nerve rather than the vagus nerve. This firewall sets the boundary between subconscious and conscious IP.

Even if all thought and decision making are epiP, they are still the result of information processing by the structure(s) that make information processing in these neural realms possible. We can experience these aspects of information processing, yet we are shut out from the other (lower?) realms of subconscious IP. Exploring this avenue may lead to insight into how these two realms interact, and possibly towards the core neural ingredients (anatomical structures and activity patterns) that allowed this particular set of hominids to develop tool making, language and culture to their current state. When machines can have such skills and do such things, they will have what we have, plus memories and processing speeds that compare to ours the way ours compare to the CNS of a larval zebrafish or some tiny ant. Since computational neuroscience, the Blue Brain and Allen Brain projects and the like are going to move forward regardless of what I do, I should at least paint a detailed enough canvas of this future that SOME HUMANS in positions of authority will consider the nature and imminence of this problem.

Consc. vs. Subconsc. Decision Making.

The process of decision making seems to be intimately associated with consciousness in humans, although we can say nothing about whether dogs or chimps engage in "conscious decision making" (which is something the materialists should seriously choke on!). But in humans, we have the experience of reflecting upon things (slow decisions), changing direction in various situations (fast decisions), and lots of other variations where we are thinking about things and decisions happen. The role of consciousness in these decisions is hard to pin down, but as noted at the bottom of the What it is Like to be a Chair page, being in a conscious state is essential to making good decisions. Certainly, the idea of making "decisions" is fuzzily defined, in that knee-jerk or escape "reflexes" would be viewed by most as involuntary and therefore non-decisional, whereas "deciding what TV channel to switch to" would be considered more voluntary. As I don't have a handy solution to the free will-automaton problem, we will instead adopt the convention that if a choice needs to be made (e.g. whether to flee or attack; which prey item to strike), then a decision has been made, free will or no free will. [Do machines using complex logic circuits imbued with countless stochastic elements (like our neurons) have free will? Not my yob!]

The next question is whether we make involuntary decisions. For example, in the What it is Like to be a Chair page, decisions were made that were perhaps instantaneous (I won't say more in case you have not yet read the page). Indeed, things that were at one time "conscious decisions" can seem to become subconscious, in that we give no conscious thought or effort to them. This is perhaps most apparent in the motor realm, although there are also purely mental decisions that require no motor activity. This is perhaps yet another clue to the murky question of what consciousness is. At some point consciousness is necessary for some decisions, and later on those decisions become rote and subconscious. How might this happen? Moreover, certain decisions seem to have a level of complexity such that they would never become subconscious (like deciding which jobs to apply for, in case anyone from my work ever reads this). But maybe even the most "complex decisions" can become rote and subconscious if you make them often enough?

In this vein, consciousness is used to make decisions about new things and new combinations of things, and when things achieve a sufficient degree of roteness, they drop into the subconscious realm. This is interesting because it ties into Paul Adams's view (syndar.org) that a special kind of learning (the highest level, I might say, given my lack of understanding of his work) occurs only very infrequently, and it is for exactly these kinds of tasks that the mechanism of conscious, focused attention becomes involved. Adams's synaptic learning involves very specific kinds of interactions between circuits in different cortical layers that extract higher-order statistics from sensory and prior experiences. Perhaps these kinds of activity have a special relationship to consciousness and/or attention?

Alternatively: decision making (dm) is subconscious, and consciousness only steers attention?
